It Starts With A Simple Premise.

I Wonder…

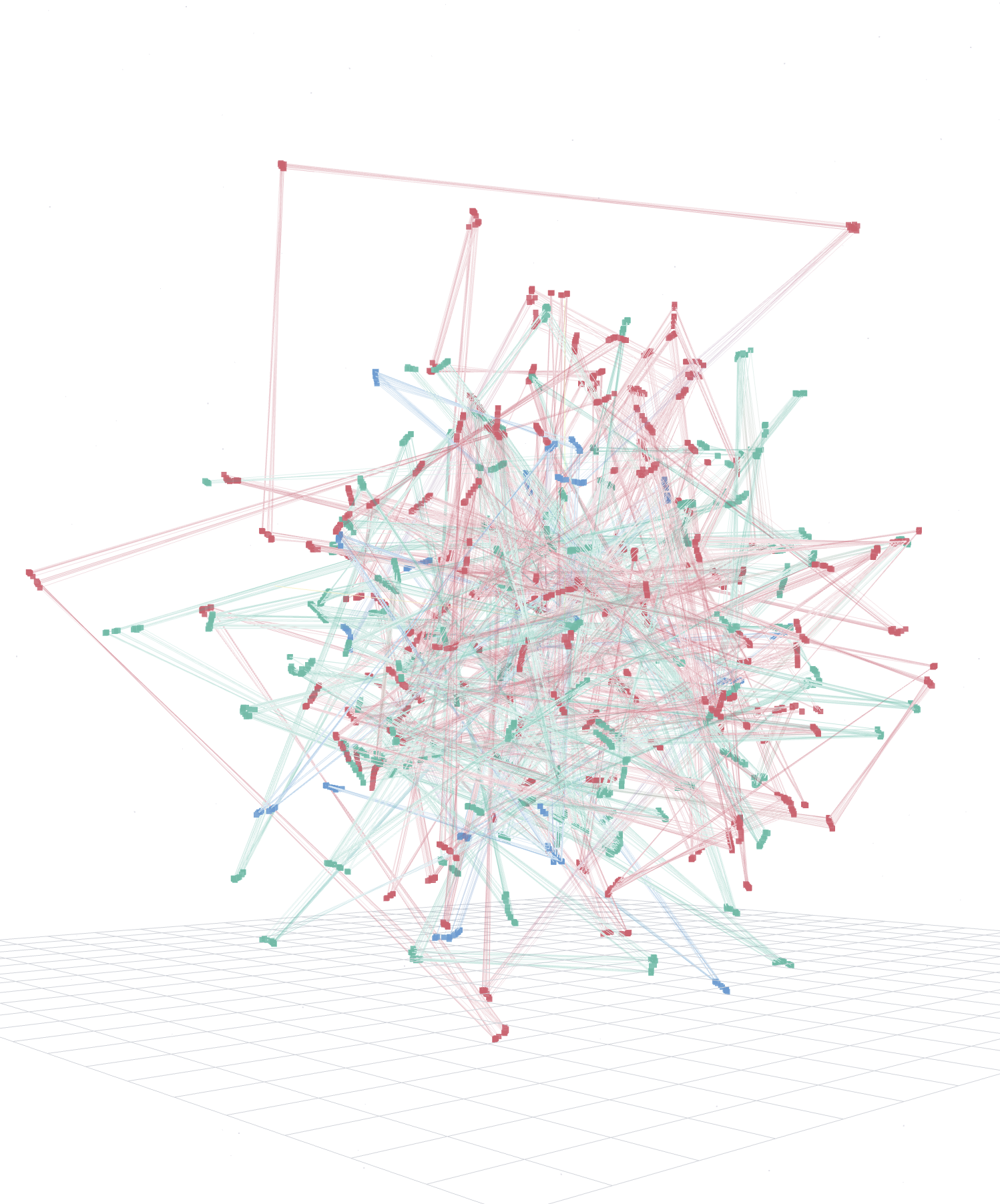

The Universal Topology Router

The Topology-Aware Geometric Router (TAG-R) is a geometric routing engine that treats network topology as the primary signal, not surface-level metadata. It decouples information from its domain semantics, routing complex relationships into the geometric spaces that match their true structure — hyperbolic for hierarchies, spherical for cycles, Euclidean for flat associations. By quantifying structural dissonance — the friction between what a node claims to be and how it geometrically behaves — TAG-R exposes sophisticated anomalies that traditional systems miss.
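To make the three target geometries concrete, here is a minimal sketch of the standard distance function in each one. It is not TAG-R's routing logic, which the text does not specify; the function names are illustrative assumptions, and the hyperbolic case assumes the Poincaré ball model with points of norm strictly below 1.

```python
import math

def euclidean_distance(u, v):
    """Straight-line distance in flat space."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

def spherical_distance(u, v):
    """Great-circle distance after projecting both points onto the unit sphere."""
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    cosine = sum(a * b for a, b in zip(u, v)) / (norm_u * norm_v)
    cosine = max(-1.0, min(1.0, cosine))  # guard against rounding drift
    return math.acos(cosine)

def poincare_distance(u, v):
    """Geodesic distance in the Poincare ball (assumes |u| < 1 and |v| < 1)."""
    sq_u = sum(a * a for a in u)
    sq_v = sum(b * b for b in v)
    sq_diff = sum((a - b) ** 2 for a, b in zip(u, v))
    return math.acosh(1.0 + 2.0 * sq_diff / ((1.0 - sq_u) * (1.0 - sq_v)))

# The same Euclidean step costs far more hyperbolic distance near the boundary,
# which is why tree-like hierarchies embed well in hyperbolic space.
near_origin = poincare_distance((0.0, 0.0), (0.1, 0.0))
near_boundary = poincare_distance((0.85, 0.0), (0.95, 0.0))
```

Comparing `near_origin` with `near_boundary` shows how hyperbolic space leaves exponentially more room toward the edge of the ball, the property that suits branching structures.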

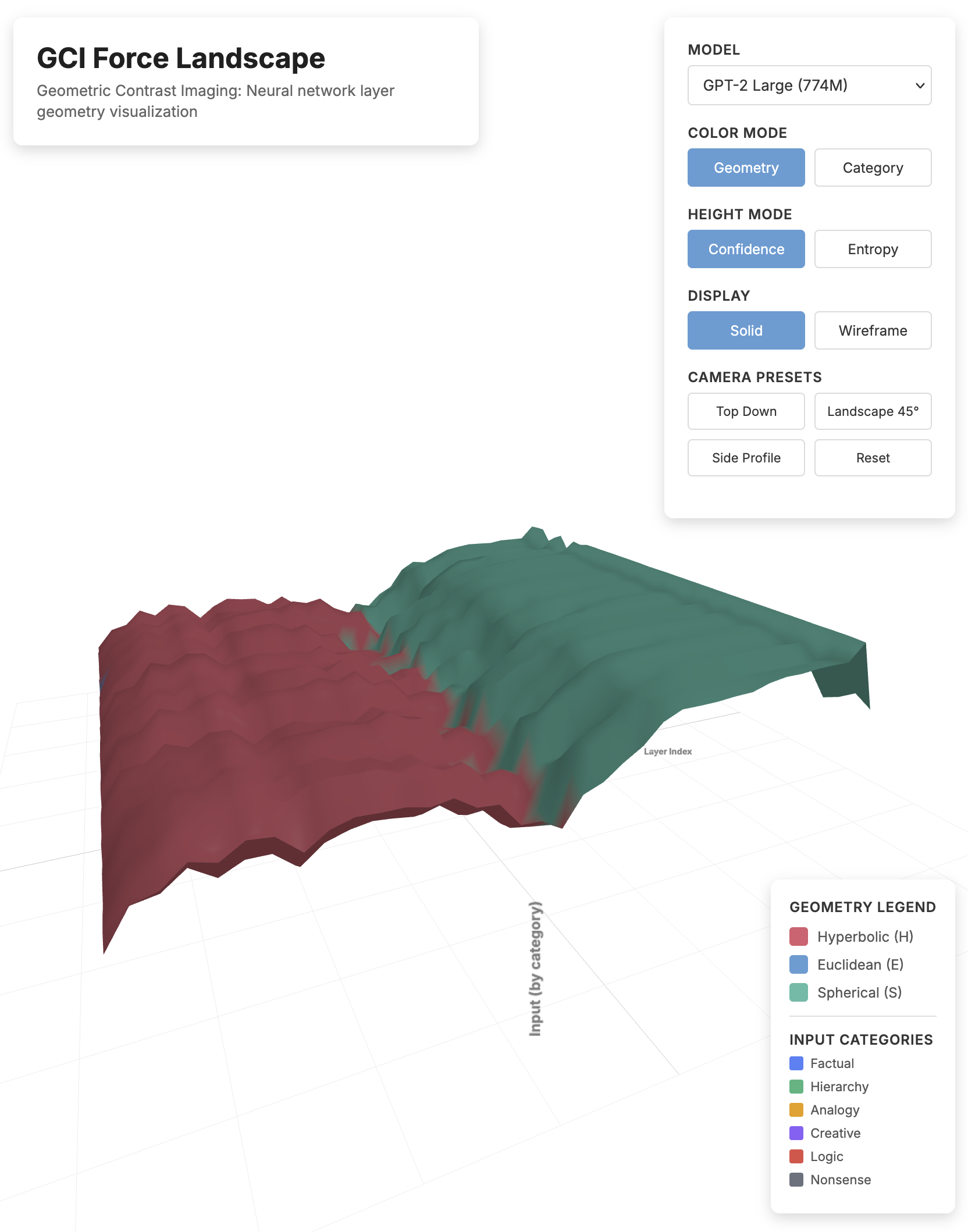

Geometric Contrast Imaging (GCI)

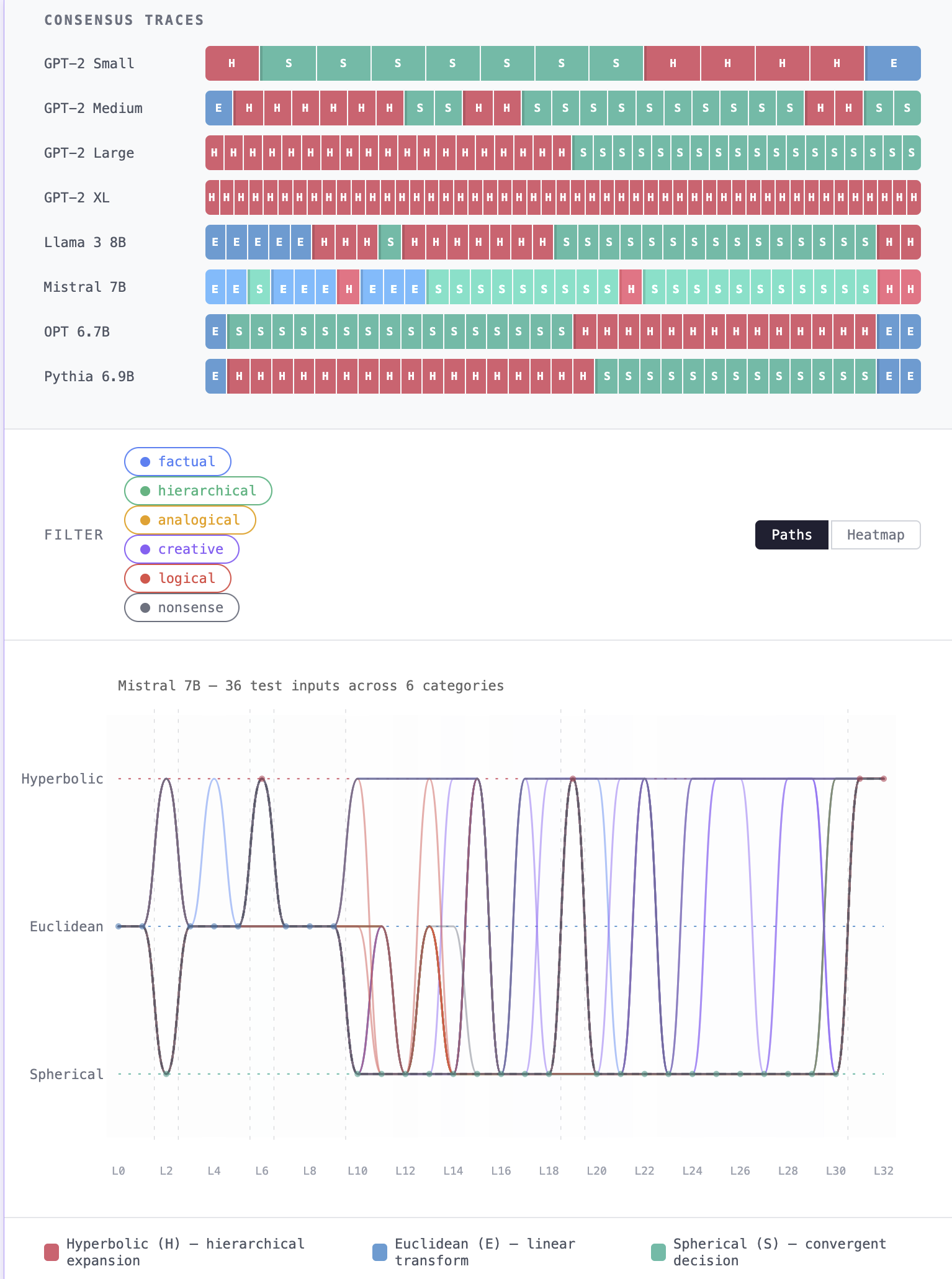

Geometric Contrast Imaging (GCI) is the first calibrated diagnostic instrument for artificial computation. Traditional interpretability attempts to read the semantic content of a neural network, focusing on what a model says; GCI instead images the computational geometry of how it thinks. By mapping the geometric phase transitions of a computation layer by layer, GCI decouples the structural engine of a thought from its semantic fuel. It does not read the output; it measures the geometric forces required to generate it.

The Emergence of Geometric Phases

If individual attention heads are flat Euclidean atoms, where does the geometry come from?

This paper answers that question. By applying Geometric Contrast Imaging at multiple scales — from individual attention heads to full layers to complete forward passes — I traced the origin of geometric structure in neural computation. The answer is head disagreement. When attention heads align, the layer is geometrically ordered: fast, decisive, transporting information forward. When they disagree, their conflicting routing signals warp the collective geometry into a curved deliberation space. The correlation between head-level entropy and layer-level spherical-phase weight is r = +0.92 — the strongest result in the GCI program.
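As a rough illustration of the head-level quantity involved, the sketch below computes the Shannon entropy of each head's attention distribution and averages it over a layer. Reading "head-level entropy" this way is an assumption, as are the function names and the toy attention weights; the GCI measurement itself is not reproduced here.

```python
import math

def attention_entropy(weights):
    """Shannon entropy (in nats) of one attention distribution over keys."""
    return -sum(p * math.log(p) for p in weights if p > 0.0)

def mean_head_entropy(heads):
    """Average entropy across the heads of one layer, for a single query token."""
    return sum(attention_entropy(h) for h in heads) / len(heads)

# Toy data (invented for illustration): each inner list is one head's
# attention weights over four key positions.
confident_layer = [
    [0.97, 0.01, 0.01, 0.01],
    [0.94, 0.02, 0.02, 0.02],
]
uncertain_layer = [
    [0.25, 0.25, 0.25, 0.25],
    [0.40, 0.30, 0.20, 0.10],
]

confident_score = mean_head_entropy(confident_layer)
uncertain_score = mean_head_entropy(uncertain_layer)
```

Under this reading, a layer whose heads each attend sharply scores low, and a layer whose heads spread their attention scores high. Correlating that score with a per-layer phase weight across layers would be the next step.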

This resolves a puzzle from mechanistic interpretability: the geometry isn't stored anywhere. No single head carries it. It exists only in the ensemble, the way temperature exists only in a population of molecules, not in any individual one. The computational phases visible in geometric traces — construction, deliberation, resolution, output — are collective phenomena that emerge at the layer scale and vanish when you zoom in to the components.

One unexpected finding: not all tokens participate equally. Function words — "the," "of," "and" — bypass the deliberation phase entirely, riding a syntactic fast-track through the architecture while content words take the full geometric journey. The network doesn't just think in phases; it triages what deserves the expensive geometry and what doesn't.

The Free Energy Geometry of Neural Computation

Why do geometric phases exist at all? The emergence paper showed that they arise from head disagreement. This paper answers why they must.

The answer turns out to be a mathematical identity that connects two independent research programs. The partition function I derived to describe geometric phase transitions in neural networks — a statistical mechanics over attention head configurations — is structurally identical to the variational free energy functional at the core of the Free Energy Principle. The identification is precise: the inverse temperature β that controls phase concentration in my framework is the precision parameter π that controls confidence in predictive processing. They are the same quantity measured from different directions. This yields a normative theory. Geometric phases aren't an accident of training or an artifact of architecture.

They are the mathematically inevitable consequence of any system that minimizes surprise under resource constraints. The spherical deliberation phase — where heads disagree and the geometry curves — is the system maintaining flexibility, exploring alternatives before committing. The hyperbolic resolution phase — where heads align and the geometry sharpens into a tree — is the system collapsing uncertainty into a decision. Every forward pass is a free energy minimization trajectory through geometric space: exploration, then exploitation, written in curvature.
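The generic shape of such an identity can be written down with textbook statistical mechanics. The block below is a sketch under stated assumptions, not the derivation from the paper: $E_k$ is a hypothetical energy assigned to configuration $k$, $q$ is any candidate distribution over configurations, and only $\beta$ is taken from the text.

$$
Z(\beta) = \sum_k e^{-\beta E_k}, \qquad p_k = \frac{e^{-\beta E_k}}{Z(\beta)}
$$

$$
F[q] = \sum_k q_k E_k - \frac{1}{\beta} H[q], \qquad H[q] = -\sum_k q_k \ln q_k
$$

$$
\min_q F[q] = F[p] = -\frac{1}{\beta} \ln Z(\beta)
$$

Raising $\beta$ concentrates $p$ on the lowest-energy configurations, while lowering it spreads probability across alternatives. That behavior matches the role the text assigns to a precision parameter.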

The bridge runs in both directions. GCI provides the Free Energy Principle with something it has lacked: a direct, layer-by-layer measurement of precision dynamics inside an inference process. The Free Energy Principle provides GCI with something it lacked: a reason. Phases exist because free energy minimization requires them.

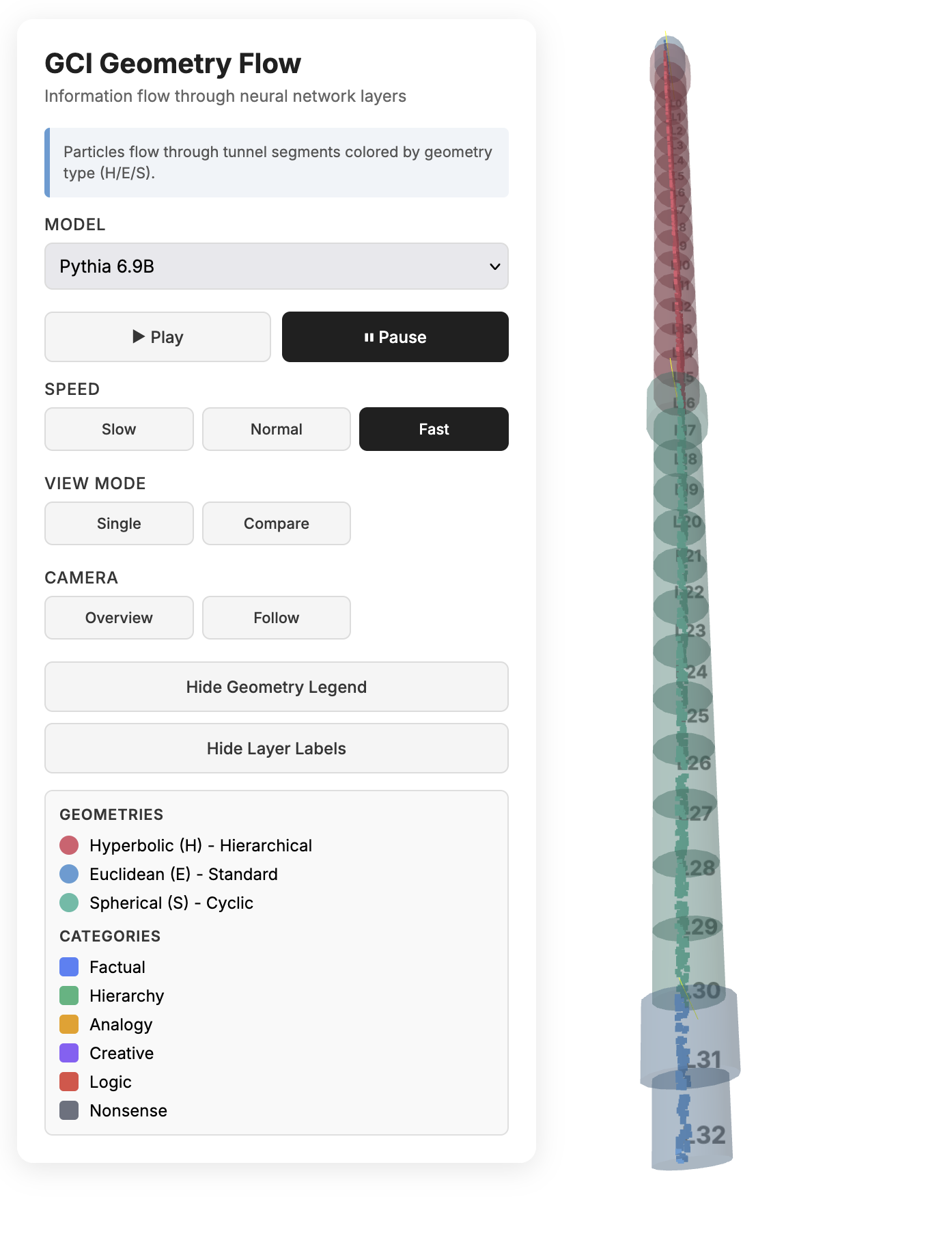

The Dynamics of Geometric Computation

The first four papers established what geometric phases are, where they come from, and why they must exist. This paper asks: what laws govern how they evolve?

By tracking each layer's geometric state through depth, I identified and validated a five-force equation family that describes how computation accelerates from layer to layer. The five forces are: a coupling force driven by the gradient of geometric agreement between heads, an information pressure that builds exponentially toward the output, an oscillatory force within the deliberation phase, boundary impulses at phase transitions, and a baseline architectural current. The equation family was validated across eight transformer models spanning five architecture families, from 124 million to 8 billion parameters. Every model tested obeys the same dynamical law, with architecture-specific coefficients.

The most revealing finding came from a failure. One architecture — the only model with parallel attention and MLP blocks — broke every smooth correction I tried. Fourteen configurations failed. The diagnosis revealed that its deliberation phase follows an autoregressive process with a strongly negative first coefficient: the residuals don't drift, they alternate, a signature consistent with parallel processing streams writing over each other at every layer. A targeted autoregressive filter rescued the model, and a cross-architecture negative control confirmed that no other architecture needs it. The failure wasn't noise. It was the equation family detecting an architectural fingerprint that no other analysis had surfaced.
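The alternating signature is easy to see in a toy first-order autoregressive series. The sketch below is illustrative only: the coefficient of -0.8, the series length, and the function names are assumptions, and the least-squares estimator stands in for whatever fitting procedure was actually used.

```python
import random

def simulate_ar1(phi, n, noise_scale=0.0, seed=0):
    """Generate x[t] = phi * x[t-1] + noise, starting from x[0] = 1."""
    rng = random.Random(seed)
    series = [1.0]
    for _ in range(n - 1):
        series.append(phi * series[-1] + rng.gauss(0.0, noise_scale))
    return series

def estimate_ar1(series):
    """Least-squares estimate of the lag-1 coefficient (no intercept)."""
    numerator = sum(series[t] * series[t - 1] for t in range(1, len(series)))
    denominator = sum(series[t - 1] ** 2 for t in range(1, len(series)))
    return numerator / denominator

def sign_flip_fraction(series):
    """Share of consecutive pairs whose signs differ."""
    flips = sum(1 for t in range(1, len(series)) if series[t] * series[t - 1] < 0)
    return flips / (len(series) - 1)

# Hypothetical residuals with a strongly negative first coefficient.
residuals = simulate_ar1(phi=-0.8, n=20)
phi_hat = estimate_ar1(residuals)
flip_rate = sign_flip_fraction(residuals)
```

With a negative coefficient and no noise, every residual flips sign relative to the one before it, so the series alternates rather than drifts. A positive coefficient would instead produce slow, same-signed decay.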

One universal law emerged across models with sufficient depth: routing entropy anti-correlates with representational velocity at r = -0.84 to -0.87. Layers where the network is uncertain move slowly — they are writing information. Layers where it is confident move fast — they are transporting it. Writing and transport are complementary. The geometry doesn't just describe what kind of computation is happening; it dictates how fast information can travel through it.
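One plausible way to compute this kind of relationship is sketched below. It assumes representational velocity means the norm of the change in hidden state between consecutive layers, which the text does not define explicitly; the function names and the toy numbers are likewise invented for illustration and do not reproduce the reported correlations.

```python
import math

def representational_velocity(hidden_states):
    """Norm of the hidden-state change between each pair of consecutive layers."""
    return [
        math.sqrt(sum((b - a) ** 2 for a, b in zip(prev, nxt)))
        for prev, nxt in zip(hidden_states, hidden_states[1:])
    ]

def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)

# Toy trajectory of one token's hidden state across five layers (made up).
trajectory = [[0.0, 0.0], [3.0, 4.0], [3.5, 4.0], [3.5, 4.2], [6.5, 8.2]]
# Toy per-transition routing entropy, high where the state barely moves.
routing_entropy = [0.2, 1.1, 1.3, 0.3]

velocity = representational_velocity(trajectory)
r = pearson_r(routing_entropy, velocity)
```

In this toy data, the transitions with high entropy are the ones where the hidden state barely moves, so `r` comes out negative, mirroring the direction of the effect described above.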

"What if intelligence and even memory are not objects stored inside a system, but patterns that emerge from the friction when one geometry meets another?"

— ROD